Getting Started

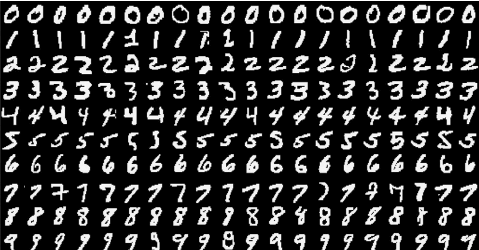

This guide walks you through building a simple neural network in Razorthink AI that classifies images of handwritten digits from the MNIST dataset. Along the way, it introduces several features of the platform.

Importing MNIST Data

MNIST is a collection of 60,000 images for training and 10,000 images for testing.

Though the original MNIST dataset contains actual images, we've converted them to CSV to keep this guide simple. Download and use one of the following files for the rest of the guide:

- mnist_train_sample.csv (2.1 MB)

- mnist_test_sample.csv (2.2 MB)

Razorthink AI Feature: Workspace

Workspace is the data storage and collaboration facility in Razorthink AI. You can store your data files and notebooks in the My Space section of the workspace. Storing files in Community Space lets you share them and collaborate with other users of the platform.

Once you've uploaded the dataset to Workspace, we can move on to preparing it for training the neural network.

Transforming Data

Each image in the dataset is 28x28 pixels, which gives 784 features per image. Here are a few sample records from the dataset:

| ID | Label | F1 | F2 | ... | F783 | F784 |
|---|---|---|---|---|---|---|
| 0 | 3 | 0 | 116 | ... | 0 | 0 |
| 1 | 6 | 0 | 125 | ... | 171 | 0 |
| 2 | 1 | 0 | 0 | ... | 255 | 0 |
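To make the record layout concrete, here is a minimal sketch that parses one CSV row and reshapes its 784 feature values back into a 28x28 grid. It assumes the column order shown in the table (ID, Label, F1 to F784) and row-major pixel order. The helper name `parse_record` and the sample row are made up for illustration, and a real file may also have a header row that needs skipping.

```python
import csv
import io

IMAGE_SIDE = 28
NUM_FEATURES = IMAGE_SIDE * IMAGE_SIDE  # 784


def parse_record(row):
    """Split one CSV row into (label, 28x28 grid of pixel values).

    Assumes the column order ID, Label, F1..F784 shown in the table.
    """
    label = int(row[1])
    pixels = [int(v) for v in row[2:]]
    if len(pixels) != NUM_FEATURES:
        raise ValueError(f"expected {NUM_FEATURES} features, got {len(pixels)}")
    # Row-major order is an assumption about how the images were flattened.
    grid = [pixels[r * IMAGE_SIDE:(r + 1) * IMAGE_SIDE] for r in range(IMAGE_SIDE)]
    return label, grid


# Synthetic record: ID 0, label 3, all pixels zero except F2 = 116.
fake_pixels = [0] * NUM_FEATURES
fake_pixels[1] = 116
sample_csv = ",".join(["0", "3"] + [str(p) for p in fake_pixels])

row = next(csv.reader(io.StringIO(sample_csv)))
label, grid = parse_record(row)
print(label, len(grid), len(grid[0]), grid[0][1])
```

The length check is there to catch a wrong column-layout assumption early, before a misaligned record reaches training.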

The Label column holds the handwritten digit for each record. We need to convert this column to its one-hot equivalent in order to train the network.
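For readers unfamiliar with one-hot encoding, the sketch below shows what the conversion produces for the ten digit classes. It is plain Python for illustration only, not how the platform performs the transformation; `one_hot` is a hypothetical helper name.

```python
NUM_CLASSES = 10  # digits 0 through 9


def one_hot(label, num_classes=NUM_CLASSES):
    """Return a list with 1 at index `label` and 0 elsewhere."""
    if not 0 <= label < num_classes:
        raise ValueError(f"label {label} outside 0..{num_classes - 1}")
    vector = [0] * num_classes
    vector[label] = 1
    return vector


labels = [3, 6, 1]  # the Label values from the sample records in the table
encoded = [one_hot(label) for label in labels]
for label, vector in zip(labels, encoded):
    print(label, vector)
```

Each label becomes a vector of length 10, which matches a network whose output layer has one unit per digit.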

Razorthink AI Feature: Data Recipe

Data Recipe provides a visual interface for creating a pipeline of data transformation operations. You can also perform Exploratory Data Analysis (EDA) and preview the data as you add transformation operations.

Designing the DL Model

Razorthink AI Feature: DL Model Designer

DL Model Designer provides a visual interface for composing deep learning models from pre-built layers such as CNN, FNN, LSTM, RNN and many more. It also supports creating non-trivial training and inference flows for complex models such as GANs.
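As a rough picture of what a simple feed-forward (FNN) classifier for this task computes, the sketch below runs one synthetic image through an untrained network with one hidden layer and a softmax output. This is not the DL Model Designer's API or output. The hidden size of 16, the weight range, the pixel scaling, and all function names are assumptions chosen for illustration.

```python
import math
import random

INPUT_SIZE = 784   # one value per pixel
HIDDEN_SIZE = 16   # hypothetical choice for this sketch
NUM_CLASSES = 10   # digits 0 through 9


def init_layer(n_in, n_out, rng):
    """Create small random weights and zero biases for a dense layer."""
    weights = [[rng.uniform(-0.05, 0.05) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def dense(inputs, weights, biases):
    """Compute the weighted sum plus bias for every unit in the layer."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def relu(values):
    """Zero out negative activations."""
    return [max(0.0, v) for v in values]


def softmax(values):
    """Convert raw scores to probabilities; subtract the max for stability."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def forward(pixels, hidden_layer, output_layer):
    """Run one image through the untrained two-layer network."""
    scaled = [p / 255.0 for p in pixels]  # scale pixel values to [0, 1]
    hidden = relu(dense(scaled, *hidden_layer))
    return softmax(dense(hidden, *output_layer))


rng = random.Random(0)
hidden_layer = init_layer(INPUT_SIZE, HIDDEN_SIZE, rng)
output_layer = init_layer(HIDDEN_SIZE, NUM_CLASSES, rng)

pixels = [rng.randint(0, 255) for _ in range(INPUT_SIZE)]  # synthetic image
probabilities = forward(pixels, hidden_layer, output_layer)
print(len(probabilities), round(sum(probabilities), 6))
```

The output is one probability per digit class. Training adjusts the weights so that the highest probability lands on the index where the one-hot label is 1.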

Training the DL Model

Razorthink AI Feature: Pipeline Builder

Pipeline Builder lets you assemble complex flows involving data transformations, model training and inference through a drag-and-drop interface.

Running Inference on the Trained Model

Coming soon.